Which of these sums up your view on content production?

“Content is about quality, not quantity. We should be producing high value, authoritative content regularly, not publishing lots of short posts. Less is more.”

“Winning in digital media now boils down to a simple equation: figure out a way to produce the most content at as low a cost as possible.” (Digiday 2013)

Do you agree with the first statement? Me too, until recently. But now I think we could be wrong.

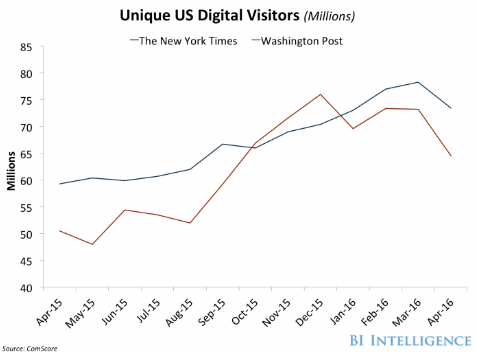

The Washington Post now publishes around 1,200 posts a day. That is an incredible amount of content. My initial reaction when I read the statistic was ‘surely that is too much, the quality will suffer, why produce so much content?’ The answer seems to be that it works. The Post’s web visitors have grown 28% over the last year and they passed the New York Times for a few months at the end of 2015.

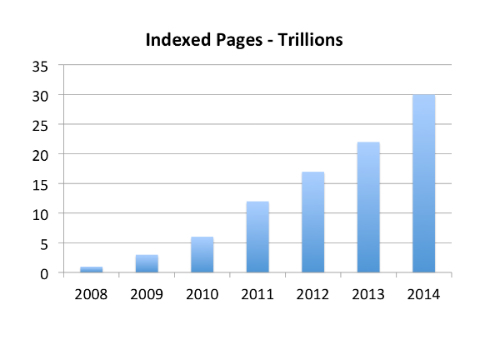

The growth in published content is just one part of the long term strategy that Jeff Bezos has put in place to drive up the Post’s audience. The content strategy appears similar to the approach Bezos adopted at Amazon for long tail audiences. Many other sites and authors are also increasing their volume of published content. Across the web we are seeing significant content growth: the number of Google indexed pages has grown from 1 trillion to 30 trillion in the last 7 years.

This growth in content is likely to accelerate for many reasons including more companies adopting high volume strategies like the Post, growth in automated content, increased volumes of short form content, lots more video content, increased long tail niche content and simply because there will be more internet users with access to easy to use publishing tools. The data suggests the number of new posts and articles published next year may be double that published this year.

In Cleveland this week Content Marketing World will be exploring the future of content. This is my small contribution, a look at why the future is more not less content and what it means for your strategy and operation.

Here's what I'll be covering:

- The current state of content

- What is Bezos doing at the Post?

- The long tail theory

- Long tail content

- Using robots to write long tail content

- The short form content opportunity

- Video and audio content

- What are the implications of content growth?

- The impact on us as readers

- Examples on how to filter content

- Final thoughts

The current state of content

Isn’t content all about quality not quantity?

I know if you are in content marketing, there is a lot of advice about quality over quantity. Provide something of value, research it well, make it helpful. It is a strategy I have followed at BuzzSumo. I spend a lot of time researching posts, as I did with this one, aiming to produce authoritative, long form content that provides insights which, hopefully, are helpful to marketers. This takes time and I produce around one to two posts a month.

I am now thinking I may have got this all wrong.

Haven’t we reached peak content?

No, not by a long way.

Last week I read a post that argued the future of content marketing is ‘less content’. The author predicts “content teams will be producing far less content” albeit content that is “far more interesting.” I think whilst it is true that content will take a wider range of forms, including interactive content, the future is not less content but the opposite.

My reasoning is based on a number of factors including the effectiveness of the strategy adopted by the Post and others.

Firstly, the growth in content continues unabated. It is not easy to track the number of new articles being published each year but we can use some proxies, for example, the number of content items being indexed by Google and the number of posts published on WordPress.

As we noted above the number of pages Google has indexed over 7 years from 2008 to 2014 has increased from 1 trillion to 30 trillion.

That is an increase of 29 trillion pages in 7 years. The number of net additional pages indexed by Google each year appears to be increasing: it was 3 trillion in 2010, 5 trillion in 2012 and 8 trillion in 2014.
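As a rough check on the pace these figures imply, the growth can be expressed as a compound annual rate. This is only a sketch: it assumes smooth compounding over the 7 years quoted above, which the yearly figures suggest is a simplification, and the function name is my own.

```python
def compound_annual_growth(start, end, years):
    """Average yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text: 1 trillion indexed pages growing to 30 trillion.
# Assumption: growth compounds evenly across the 7 years.
index_growth = compound_annual_growth(start=1e12, end=30e12, years=7)
print(f"Implied average growth: {index_growth:.1%} per year")
```

On these assumptions the index grew by a little over 60% a year on average.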

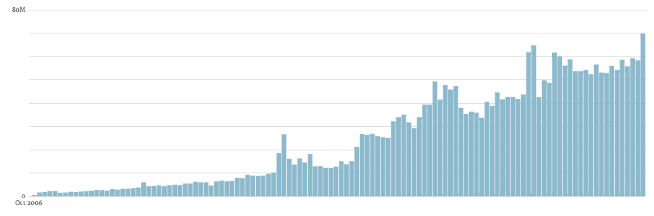

WordPress publish data on the number of posts published by blogs they host, or blogs that use their Jetpack plugin. This is just a single content platform but it is very popular and it gives us an indication about content growth. The figures from December 2006 to July 2016 are as follows:

Again there appears to be a steady growth in the volume of published content. In July 2016 nearly 70m new posts were published on WordPress. Over 2m each day.

It is easy to say most content is poor and can be ignored but quality content is also increasing. In addition to the high volume publishing of news sites such as the Post, in the area of science there are at least 28,100 active scholarly peer-reviewed journals publishing over 2.5m new scientific papers each year. The volume of content being published has been growing, with 4-5% more publishing scientists each year, and there is evidence that publication growth is accelerating.

There is no indication from the data that we have reached peak content. In fact, the trends indicate that the volume of published content is increasing. Levels of internet access across the world are still increasing, as are literacy rates. This, combined with easier creation tools, suggests we may have some way to go before we hit peak content.

My view is that we could see a doubling in the number of posts published next year. This is simply taking the current growth in published content and understanding how this growth could be accelerated by factors such as:

- Higher numbers of internet users and growing literacy

- Falling costs of content production and distribution

- Easier content production with simple to use tools, particularly video

- The success of high volume strategies being adopted by sites such as the Post and others, encouraging more businesses to adopt similar strategies

- A significant growth in automated, algorithm driven, content creation

Let’s start by looking at the high volume content strategy being adopted by the Post.

What is Bezos doing at the Post?

Jeff Bezos is a smart guy, and since he became the owner of the Post, its traffic has grown. In the last year, from April 2015 to April 2016, its visitors grew 28%, and from October to December 2015 it had higher visitor numbers than the New York Times.

I wanted to understand how Bezos and the team have achieved their visitor growth. There appear to be a number of factors including:

- Data – Bezos employed a group of data scientists to analyze the content that gains traction

- Technology – The Post developed software called Bandito that allows editors to publish articles with up to five different headlines, photos and story treatments, with an algorithm deciding which one readers find the most engaging.

- Headlines – Using data and technology the Post has developed more viral headlines, which is arguably different from clickbait if there is substance to the content

- Video – The Post has increased its use of video

- Content growth – The Post has adopted a content strategy which involves producing a high volume of content aimed at engaging a long tail of niche interests
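To make the headline testing idea in the Technology point more concrete, here is a minimal sketch of one way an algorithm can decide which variant readers find most engaging. I don't know how Bandito works internally; the name hints at a multi-armed bandit, so this uses a simple epsilon-greedy bandit as an assumption, not as a description of the Post's software. The function names, click rates and epsilon value are all hypothetical.

```python
import random


def click_rate(shown, clicked):
    """Observed click rate for a variant; 0.0 until it has been shown."""
    return clicked / shown if shown else 0.0


def best_variant(shows, clicks):
    """Index of the variant with the highest observed click rate."""
    return max(range(len(shows)), key=lambda i: click_rate(shows[i], clicks[i]))


def run_bandit(true_rates, rounds=1000, epsilon=0.1, seed=0):
    """Simulate epsilon-greedy headline selection.

    true_rates: hypothetical probability that a reader clicks each headline.
    epsilon: share of impressions spent trying a random headline.
    """
    rng = random.Random(seed)
    shows = [0] * len(true_rates)
    clicks = [0] * len(true_rates)
    for _ in range(rounds):
        if rng.random() < epsilon:
            arm = rng.randrange(len(true_rates))  # explore a random headline
        else:
            arm = best_variant(shows, clicks)     # exploit the current leader
        shows[arm] += 1
        if rng.random() < true_rates[arm]:        # simulated reader click
            clicks[arm] += 1
    return shows, clicks


# Five hypothetical headline variants with made-up click rates.
shows, clicks = run_bandit([0.02, 0.03, 0.05, 0.04, 0.01], rounds=5000)
print("Impressions per headline:", shows)
print("Current leader:", best_variant(shows, clicks))
```

The design choice is the trade-off between the two branches: most impressions go to the headline that is currently winning, while a small share keeps testing the others in case the early data was misleading.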

I will cover this strategy in more detail in a future post, as content marketers can learn a lot from Bezos and the Post. However, what I want to focus on here is their strategy of increasing the volume of content, and more specifically long tail content.

The long tail theory

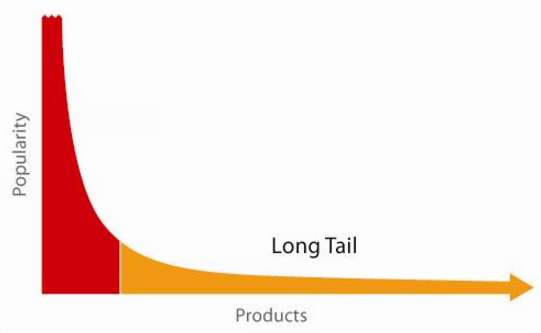

For those that are not familiar with the long tail theory it was first codified for me by Chris Anderson, editor of Wired Magazine. In essence the theory sees a shift away from a focus on a relatively small number of “hits” (very popular products) in certain industries and focuses instead on the huge number of niches that exist (the long tail).

Amazon was a classic long tail company that started to cater for the niche markets. The long tail theory goes something like this.

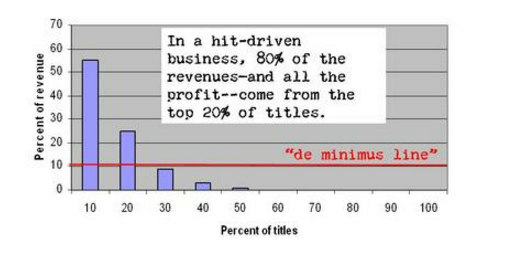

Popular books such as the New York Times best sellers sit in the red area and most book shops historically had a heavy focus on stocking these popular books as they knew they appealed to a broad majority of their audience. In this traditional hit driven business it has been estimated that 80% of revenues and nearly all profits came from the top selling products.

Whilst everyone recognised the potential of the long tail it was very expensive to produce, store and distribute products to these smaller niche audiences.

With the advent of the internet, Amazon lowered the costs of storing and distributing books and offered books to the long tail audience. Whilst the individual demand for a book in the long tail is much lower than for the best sellers, collectively there is a huge demand from people for niche or specialist books. The revenues from the long tail could actually be much larger than the revenue from popular titles.

Back in 2005 Chris Anderson noted how a new company, Netflix, was changing the model. At that time Netflix was offering a catalogue of DVDs over 8 times greater than that of the typical Blockbuster store, and a significant and growing portion of its revenues came from DVDs not available in stores: the long tail.

As the costs of production, storage and distribution fell, particularly with online and digital products, it became economically attractive to provide products for the long tail niche audience, in fact revenue from the long tail became greater than the hits because the tail was very long indeed. Companies like Amazon and Netflix were arguably some of the first long tail companies.

Why is this relevant to content?

Long tail content

It seems to me Bezos is taking the same long tail approach to content at the Post. Of course we all want the big content article that garners millions of views but traffic for thousands of niche articles can collectively add up to a lot more traffic overall.

By creating over 1,000 pieces of content a day you are more likely to cater for demand in the long tail for specific niche content or simply to produce content that engages a wider audience. The Guardian has taken a similar approach and publishes similar volumes of content to the Post. The Huffington Post was reported back in 2013 to be producing over 1,200 articles a day. Sites such as BuzzFeed have also increased their content production, the Atlantic recently reported the following figures:

April 2012 BuzzFeed published 914 posts and 10 videos

April 2016 BuzzFeed published 6,365 posts and 319 videos

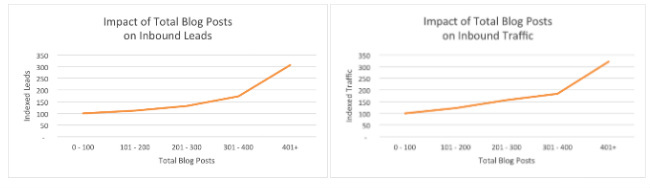

That is a significant growth in content publication. The reason appears to be simply that more content on these sites drives more traffic. This appears to be the case in both B2B and B2C. In 2015 Hubspot looked at the data from their own customers and found that both traffic and leads increased with higher content volumes.

The impact of content volume was greater in B2C but it was also significant in B2B.

Increased traffic and leads does not necessarily mean a good return on investment but the cost of content production is falling and distribution costs are very low, enabling more content to be produced. We are now seeing a high volume approach being taken by many established B2B sites and influencers.

This content growth is not a surprise. Doug Kessler predicted an exponential content increase in 2013.

How many articles can you write about inbound marketing or related topics? 100? 200? 500? Well, Hubspot published over 4,000 in the last 12 months alone.

Does this long tail approach work in B2B? Let’s take Hubspot’s approach and compare it to say Social Media Examiner. Two of the big sites in the marketing industry.

Social Media Examiner are no slouches when it comes to publishing content, they published over 400 posts in the last year and averaged over 3,900 shares per post, which is incredibly high, even discounting the automated shares by bots.

Hubspot by comparison published over 4,000 posts, ten times as many as Social Media Examiner in the same period. Their posts were shared a lot less on average, almost 600 shares a post.

So who has the better content strategy? My instinct until now has been that you are better off being Social Media Examiner than Hubspot. You can provide higher quality, give more promotion to each post and drive higher average shares and traffic, and you get a much better return on your content investment. However, Hubspot’s articles received 2.8m shares in total compared to the 1.8m shares of posts on Social Media Examiner. That is 1m more shares, roughly 55% more. We don’t have traffic figures for these sites but I would anticipate Hubspot also received similarly higher levels of traffic.
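The arithmetic behind this comparison is simple enough to check. The sketch below uses only the rounded figures quoted above; the variable names are mine, and because the post counts and averages are given as "over" or "almost", the volume times average estimates will not match the reported totals exactly.

```python
def relative_gain(a, b):
    """How much larger a is than b, as a fraction of b."""
    return (a - b) / b


# Reported total shares over the last 12 months (rounded figures from the text).
hubspot_total = 2_800_000
sme_total = 1_800_000

extra_shares = hubspot_total - sme_total
gain = relative_gain(hubspot_total, sme_total)

# Rough estimates from posts x average shares per post, using the rounded
# numbers in the text ("over 400" posts at "over 3,900" shares, and
# "over 4,000" posts at "almost 600" shares).
sme_estimate = 400 * 3_900
hubspot_estimate = 4_000 * 600

print(f"Extra shares for Hubspot: {extra_shares:,} ({gain:.0%} more)")
print(f"Estimates from volume x average: {sme_estimate:,} vs {hubspot_estimate:,}")
```

Either way the picture is the same: a much lower average per post is more than offset by ten times the volume.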

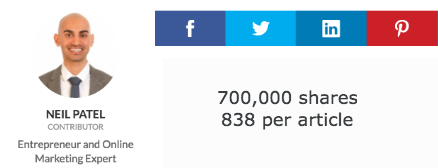

This high volume strategy has also been adopted by influencers such as Neil Patel. Over 800 articles authored by Neil have been published in the last 12 months. That is a lot of content but his articles have averaged 838 shares per article, more than Hubspot has achieved, and in total Neil’s posts have achieved approximately 700,000 shares. That is almost half the shares achieved by a major site such as Social Media Examiner.

A high volume content approach probably only works if you have built an audience with authoritative, quality content first. However, if you are an established brand, should you be looking to adopt the high volume strategies that work for the Post, Hubspot and Neil Patel? My instinct is we will see others adopting similar strategies and increasing, not decreasing, their content output.

My previous reservations have centered on how difficult and costly it is to produce quality content on a regular and consistent basis. But it might be that I have been guilty of old school thinking when it comes to content volume and long tail content.

Using robots to write long tail content

The Post announced this summer that it would use robots to write many of its Olympics stories. These posts would still involve human editing but the algorithm created the initial story. It is easy to be critical of using algorithms to write stories but in this way the Post can use human journalists efficiently and cater for the demand for long tail content by niche audiences. In areas like sports we are primarily talking about robots writing short articles that report the score, who scored, the time of scores, the current league position etc. This data can be easily used by algorithms to write short reports, which may be all someone requires. Journalists can write the more in-depth stories and human stories, and leave the short reports to the robots.

In my view this makes sense. I don’t always need a perspective or in-depth article on movements in the financial markets, on new Google announcements or the latest soccer scores. Content writing algorithms are also getting better by the week. Don’t believe me? Go and look at the text written by Narrative Science’s algorithms or at Automated Insights.

At this point in time it might seem far too complex and expensive for sites to create algorithms to create content. However, these costs will fall and algorithms will become commoditised, open sourced and available to everyone, in much the same way as is happening in machine learning. I anticipate there will be significant growth in robot written content which, whilst relatively small, will be a growing proportion of all online content.

The short form content opportunity

My personal approach has been to create well researched, authoritative and long form content. I have felt good about this as the data consistently shows that long form content gets more shares on average. However, statistics can be misleading. When I looked recently at the most shared content published by marketing and IT sites, the data confirmed that on average long form posts achieved more shares. But when I looked in more detail at the 50 most shared posts, 45 of them were short form and under 1,000 words. Thus people are very happy to share short form content and given the pressures on everyone’s time may prefer short form content.

How do we reconcile these two facts, namely that longer form content gets higher average shares but the top most shared content is made up overwhelmingly of short form content? In my view it is because there is simply a lot more, very low quality short form content. Cynically it is easier to produce poor quality short form content than poor quality long form content. This volume of poor quality short form content drags down the average shares for all short form content relative to long form content.
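A small worked example shows how both facts can be true at once. The numbers below are entirely made up for illustration and are not from my data: a handful of short form hits sit at the top of the list while a large volume of weak short posts pulls the short form average below the long form average.

```python
from statistics import mean

# Hypothetical share counts, invented purely to illustrate the effect.
# Short form: 45 big hits plus a very large number of weak posts.
short_posts = [("short", 50_000)] * 45 + [("short", 20)] * 9_955
# Long form: 5 hits plus a smaller number of solid performers.
long_posts = [("long", 40_000)] * 5 + [("long", 500)] * 995

mean_short = mean(shares for _, shares in short_posts)
mean_long = mean(shares for _, shares in long_posts)

# Rank every post by shares and look at who makes the top 50.
all_posts = short_posts + long_posts
top_50 = sorted(all_posts, key=lambda post: post[1], reverse=True)[:50]
short_in_top_50 = sum(1 for kind, _ in top_50 if kind == "short")

print(f"Average shares, short form: {mean_short:.1f}")
print(f"Average shares, long form:  {mean_long:.1f}")
print(f"Short form posts in the top 50: {short_in_top_50}")
```

In this invented data set long form wins on average shares, yet 45 of the top 50 posts are short form, simply because the weak short posts dominate the average.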

I personally think there is a big opportunity for short form content and I aim to adapt my strategy to focus more on repurposing and republishing short form versions of my research that focus on specific issues. These could be focused around just a single image or chart.

Short form content could also take the form of serialisation. Most of Charles Dickens’s novels started as weekly or monthly instalments. They made the works more accessible and built an audience that eagerly anticipated the next instalment through his episodic approach and use of cliff hangers. A serialised set of articles can still become a book or long form content. Short form content doesn’t necessarily mean a reduction in quality, particularly if you are serialising your long form piece into short form episodes.

If others think in the same way we may see a significant increase in short form content.

Video and audio content

It has never been easier to produce video content and the effectiveness of video means we are likely to see increased video production. In many ways audio and video content can be produced in less time and more efficiently than written content, even with transcripts.

What are the implications of content growth?

Just because the world is going to produce a lot more content doesn’t mean you need to start trebling the volume of the content you produce. However, you do need to reflect on your strategy in a world of ever increasing content.

It is easy to decry and criticise higher volumes of content, particularly as we are likely to see a lot more poor quality content or even Crap as Doug Kessler says. However, as Mark Schaefer, who coined the term ‘content shock’ points out, for many consumers more content is a good thing. So if you are passionate about the Men’s Trampoline (yes, it is an Olympic event) there is more likely to be a series of long tail articles keeping you updated. The Washington Post did actually publish an article on the American who finished 11th in the Men’s Trampoline, a classic long tail post.

Mark Schaefer points out that in a world of content shock you will get less individual attention for your posts. This is simple math at one level, if content production outstrips growth in social sharing, we will see shares per article decrease on average. However, it does not mean content marketing is not a sustainable strategy. It is ultimately about return on investment. If you can lower content production and distribution costs and engage a small audience which converts you can still achieve a return on your investment.
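The simple math can be sketched in a few lines. The growth figures here are hypothetical, chosen only to show the direction of the effect: articles double while total shares grow by 20%.

```python
def shares_per_article(total_shares, articles):
    """Average shares each article receives."""
    return total_shares / articles


# Hypothetical baseline year: 1m shares spread across 10,000 articles.
before = shares_per_article(total_shares=1_000_000, articles=10_000)

# Hypothetical following year: articles double, total shares grow only 20%.
after = shares_per_article(total_shares=1_200_000, articles=20_000)

change = (after - before) / before
print(f"Average shares per article: {before:.0f} -> {after:.0f} ({change:.0%})")
```

In this scenario the average falls from 100 to 60 shares per article, even though total sharing has grown.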

What is clear, however, is you can’t just start writing 1,000 articles a day. Google would probably be very unhappy if you did. You need to build some authority and an audience over time. However, there is a question about the point at which you increase the volume of your content and leverage the brand you have built.

Quality will still matter, even in a world of high volume content. It may not need to be long form but it does need to meet a quality threshold. Brands should produce content that is always worth consuming, albeit it might be consumed by smaller niche audiences.

A key challenge will be lowering the cost of producing content. Is the answer different writers, better research and creation tools, more content curation, guest bloggers, more short form content, or going right through to automation?

Can you use algorithm written content to satisfy particular niches? For example, it would be a simple task for us to create a weekly article on the most shared fashion content or automotive content written by an algorithm. While this might sound like a race to the bottom, there is an argument that quality for these types of articles is not about the prose or insights, but about the content being timely and relevant for the audience. Relevance wins over quality for this form of content and bots will hit their deadline every time.

In a world of high volume content your amplification strategy will be more important. Producing quality content and hoping people will find it will no longer work, if it ever did. Content promotion via your own teams and influencers, via email to your subscribers, via paid ads and via social will be critical.

The impact on us as readers

It seems to me one of the biggest challenges of content growth is for us as readers or consumers of content. There will be more articles than ever to work through. You will need good filters so you can be aware of key developments and news but not overwhelmed by content. The difficulty as Doug Kessler says is that poor content may increasingly look on the surface like good content, as everyone learns how to write effective headlines.

We will all need to make smarter use of curators, those people that read widely, that keep up-to-date on specific issues and share articles and views with the rest of us. These people may curate on blogs, content hubs or simply share articles via Twitter. No one can read everything, we will need to rely more than ever on others reading and sharing articles. Teams will have to learn how to effectively leverage their members to curate and filter content.

Examples on how to filter content

The following tools and filters are personal to me but I think they provide an indication of what we all need in some form:

Content alerts

There is no way you can read all the posts published today to identify what people are saying about a particular topic or brand. You need to use tools and robots. I use BuzzSumo alerts, for example I have an alert for mentions of BuzzSumo and I get alerted every time we are mentioned in a web article. I also do this for specific topics such as data driven marketing.

Content aggregation and filtering

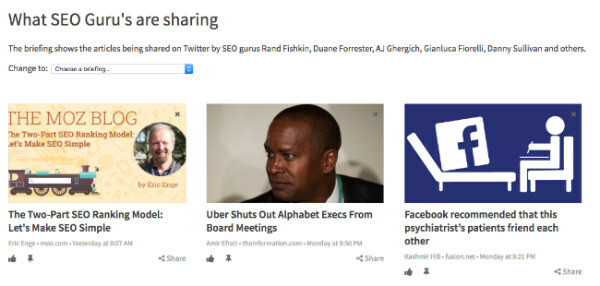

I like to aggregate all relevant new articles each day into specific briefings that I can skim and decide which articles to explore in more depth. For some briefings I use keywords, for example here is my briefing on Negative Interest Rates. For other briefings I simply pull together content from the experts I respect. Here is my daily briefing from the best entrepreneur, SaaS and StartUp blogs and in this one I have simply added the Twitter handles of various SEO gurus to get a daily briefing on what they are sharing.

Team sharing

On Anders Pink I am in a team with my colleagues and they help me filter by upvoting and commenting on articles. This helps me decide what to read each day. I think leveraging your colleagues in this way is something every team needs to do. It is hard to stay informed in a fast moving world but your colleagues, and your social networks, can help. Your colleagues are likely to have a finely tuned antenna for relevant industry and competitor news.

Trending content

I use BuzzSumo’s trending dashboard to see what is trending each day i.e. what is resonating in my industry. Trending content does not mean good content, as was exposed by Facebook’s recent algorithm issues, but it is useful to see what content is resonating.

Final thoughts

Yes, the irony is most people will not have read to this point. The data shows that most people only scroll down through 50% of an article, and according to Chartbeat 55% of visitors will have left within 15 seconds.

I should probably have serialised this post and written a number of shorter posts on key aspects such as a chart on content growth, a piece on the Post’s content strategy or an article on long tail content. Maybe those shorter pieces would have kept your attention and motivated you to read the next article.
